Vercel Hobby hit 1M/1M Edge Requests. The bug was a Cache-Control header.

❯ cat --toc

- Plain version: cache HIT ≠ free
- The wall: April 27 noon
- Part 1: Three dead ends
  - "Traffic spike?"
  - "Bots?"
  - "Image bloat?"
- Part 2: must-revalidate × cache HIT
  - The math
  - Why this default exists
- Part 3: 30-day timeline, one chart says it all
- Part 4: 3 lines
- Part 5: Footgun, immutable is genuinely immutable
- Part 6: Rolling 30-day, and an inbox I never read
  - The embarrassing finding
- Part 7: What this article itself might break
- Part 8: I changed my mind mid-draft, upgraded
- What's next: May 30 follow-up
- References

TL;DR

ai-muninn.com hit 1,002,906 / 1,000,000 Vercel Hobby Edge Requests this month. Cause: Next.js + Vercel default to Cache-Control: public, max-age=0, must-revalidate on /public/*. Even cache HITs trigger conditional GETs that count as edge requests. Fix: 3 lines in next.config.ts adding immutable for slug-stable paths. Real lesson: I dismissed three Vercel notifications because my notification inbox had 60 unread items. The cache header was a footgun. The inbox was the actual bug.

Plain version: cache HIT ≠ free

You think browser cache means no server hit? Half right.

If the response carries cache-control: public, max-age=0, must-revalidate, the browser sends a conditional GET on every page load asking "is my version still current?" Even when the server replies "yes, unchanged" (HTTP 304, no body), that round trip still counts as one request.

Vercel Hobby gives you 1 million requests on a rolling 30-day window. Not a calendar month. One blog page = 1 HTML + 1 OG image + 1 cover video + N inline images. A reader reloading = all of them again. With ~30 articles, the math is nasty.

The wall: April 27 noon

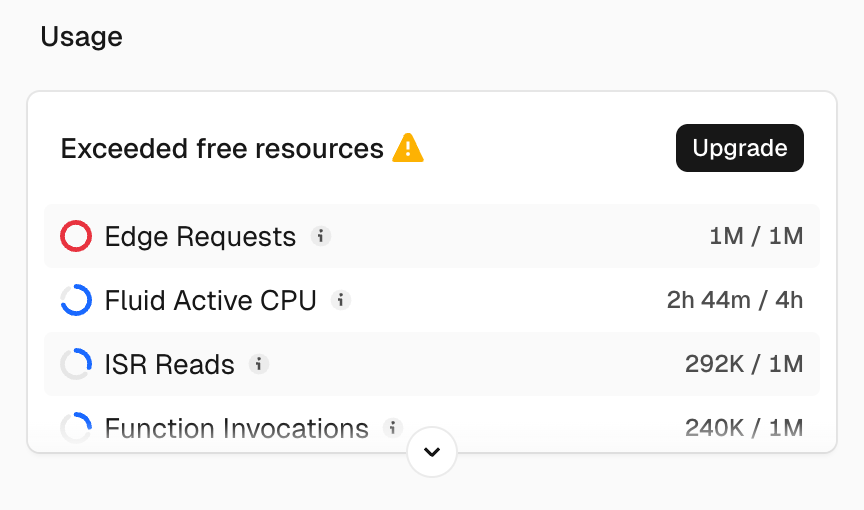

I opened the Vercel dashboard to push a new post.

Exceeded free resources ⚠️

1,002,906 / 1,000,000 Edge Requests. Fluid CPU 2h44m / 4h, ISR Reads 292K / 1M, Function Invocations 240K / 1M. Everything else green. The problem is isolated to one line.

But sitting with it for a minute: this number means people are reading. ai-muninn is a small bilingual blog about local LLMs and AI infra. Pegging Vercel's free quota is technically a footgun, but socially it's good news. So the question split: did 1M humans actually visit, or is my config inflating things 10×?

Both turned out to be true.

Part 1: Three dead ends

"Traffic spike?"

GA4 28-day sessions: 1,733. Down slightly from last month. Not it.

"Bots?"

I tried to pull Vercel runtime logs to inspect User-Agents. Hobby plan retains runtime logs for 1 hour, capped at 4,000 rows (docs). Dashboard showed ~200 entries covering a single minute. The spike was days old. Dead end.

"Image bloat?"

I hadn't pushed any larger media. And edge requests count request count, not bandwidth. Image size doesn't matter for this metric.

Three dead ends, 30 minutes.

Part 2: must-revalidate × cache HIT

Last resort, curl -I an OG image:

    $ curl -I https://ai-muninn.com/og/some-post.png
    cache-control: public, max-age=0, must-revalidate
    x-vercel-cache: HIT

Two lines that shouldn't coexist. Took three seconds to register.

x-vercel-cache: HIT means Vercel CDN had it cached, didn't hit origin. ✓

max-age=0, must-revalidate means the browser must revalidate every load, treating its own cache as stale on arrival. Even if the server returns 304 Not Modified without body, the round trip still costs one edge request.

Vercel meters by request count, not bandwidth, not cache miss. CDN HIT, 304 response, user sees cached image. That conditional GET still bills you.
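To make the distinction concrete, here is a minimal sketch that reads a Cache-Control value and answers one question: will a browser send a conditional GET on every normal navigation? The function names are mine, not from any library, and the logic is a simplification (it ignores heuristic freshness when `max-age` is absent).

```typescript
// Parse a Cache-Control header value into a directive map.
// Directives without a value (e.g. "immutable") map to true.
function parseCacheControl(header: string): Map<string, string | true> {
  const directives = new Map<string, string | true>();
  for (const part of header.split(",")) {
    const [rawKey, rawValue] = part.trim().split("=");
    if (!rawKey) continue;
    directives.set(rawKey.toLowerCase(), rawValue === undefined ? true : rawValue);
  }
  return directives;
}

// True when the browser may store the response but must treat it as stale
// on every load, so each navigation costs one conditional GET.
function revalidatesEveryLoad(header: string): boolean {
  const d = parseCacheControl(header);
  if (d.has("no-store")) return false; // nothing is stored, so it is a full GET instead
  if (d.has("no-cache")) return true;
  const raw = d.get("max-age");
  const maxAge = typeof raw === "string" ? Number(raw) : 0; // simplification: missing max-age counts as 0
  return maxAge <= 0;
}

console.log(revalidatesEveryLoad("public, max-age=0, must-revalidate")); // true
console.log(revalidatesEveryLoad("public, max-age=31536000, immutable")); // false
```

The first header is the one from the curl output above: cached at the CDN, yet still one request per load.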

The math

Per blog page = 1 HTML + 1 OG image + 1 cover video + N inline images. A reader visiting 5 posts = at least 25 edge requests (assuming 2 or more inline images per post), even with browser cache, because max-age=0 forces revalidation on every load.

ai-muninn's 27 articles × variable inline images + sitewide OG/favicon/cover video → 50K-100K daily requests is plausible.
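The per-page formula can be written out as a quick sketch. The inline image counts passed in below are assumptions for illustration (2 is the smallest count that reaches 25 requests over 5 posts), not measurements.

```typescript
// One page view = 1 HTML + 1 OG image + 1 cover video + N inline images.
function requestsPerPageView(inlineImages: number): number {
  return 1 + 1 + 1 + inlineImages;
}

// With max-age=0, every page view repeats all of those requests.
function requestsForVisit(postsRead: number, inlineImages: number): number {
  return postsRead * requestsPerPageView(inlineImages);
}

// How many page views it takes to use up a request quota.
function pageViewsToExhaust(quota: number, inlineImages: number): number {
  return Math.ceil(quota / requestsPerPageView(inlineImages));
}

console.log(requestsForVisit(5, 2)); // 25
console.log(pageViewsToExhaust(1000000, 5)); // 125000
```

With 5 inline images per post (an assumed figure), roughly 125,000 page views of any kind, human or bot, would be enough to fill the 1M quota.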

Why this default exists

Next.js default for /public/* is Cache-Control: public, max-age=0. Vercel's edge layer adds must-revalidate so deploys reach users immediately (Vercel Cache-Control docs).

Sensible for build artifacts you might re-upload with the same name. Wrong for blog static media where filenames are slug-stable and never re-used.

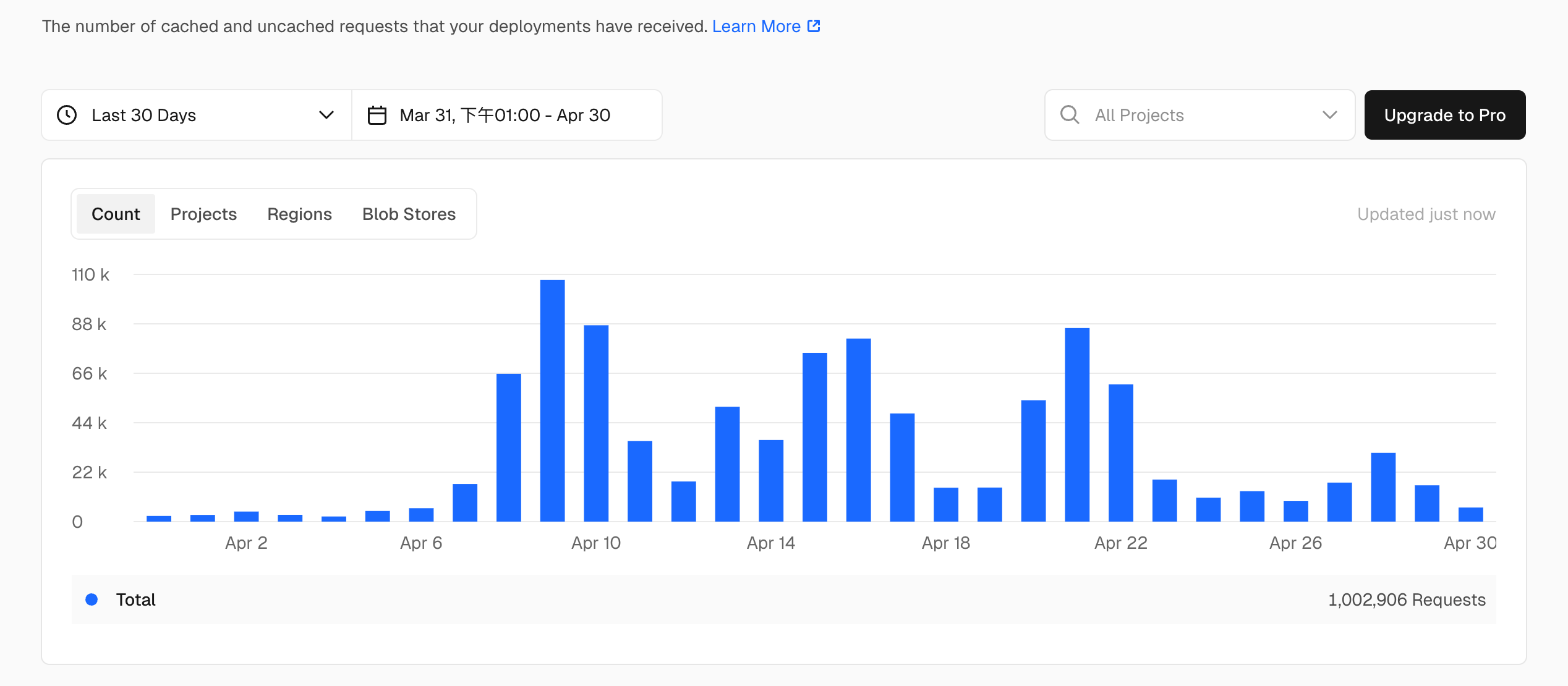

Part 3: 30-day timeline, one chart says it all

Cleaner than any text:

| Range | Requests | Note |
|---|---|---|
| Apr 1–6 | < 10K/day | Quiet start |
| Apr 9 | ~110K | Peak day |
| Apr 9–10 | ~200K total | 20% of monthly quota in 2 days |
| Apr 13–15 | ~200K total | Another wave |
| Apr 21–22 | ~135K total | Final wave |
| Apr 27 | fix deployed | ↓ |
| Apr 28–30 | 5–15K/day | ~90% daily cut |

The Apr 9 / 13-15 / 21-22 spikes weren't random. Probably some combination of social shares (Threads, Reddit), AI crawlers (the llms.txt was deployed in early April), and bot traffic. Hobby's 1-hour log retention couldn't tell me which. Free tier is fine for learning, not for incident debugging.
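Adding up the three waves from the table shows how concentrated the usage was. This is a small sketch using the approximate totals above; the `Wave` type and variable names are mine.

```typescript
interface Wave {
  label: string;
  days: number;
  requests: number; // approximate totals taken from the table
}

const QUOTA = 1000000;

const waves: Wave[] = [
  { label: "Apr 9-10", days: 2, requests: 200000 },
  { label: "Apr 13-15", days: 3, requests: 200000 },
  { label: "Apr 21-22", days: 2, requests: 135000 },
];

const waveTotal = waves.reduce((sum, w) => sum + w.requests, 0);
const waveDays = waves.reduce((sum, w) => sum + w.days, 0);
const shareOfQuota = waveTotal / QUOTA;

console.log(waveTotal); // 535000
console.log(waveDays); // 7
console.log(shareOfQuota); // 0.535
```

Seven days account for roughly 535K requests, a little over half of the quota.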

Part 4: 3 lines

next.config.ts:

    import type { NextConfig } from 'next'

    // One year of freshness; immutable tells browsers not to revalidate within it.
    const IMMUTABLE_CACHE = 'public, max-age=31536000, immutable'

    const nextConfig: NextConfig = {
      async headers() {
        return [
          // Only paths whose filenames are slug-stable and never reused.
          { source: '/videos/:path*', headers: [{ key: 'Cache-Control', value: IMMUTABLE_CACHE }] },
          { source: '/og/:path*', headers: [{ key: 'Cache-Control', value: IMMUTABLE_CACHE }] },
          { source: '/images/:path*', headers: [{ key: 'Cache-Control', value: IMMUTABLE_CACHE }] },
        ]
      },
    }

    export default nextConfig

Verify:

    $ curl -I https://ai-muninn.com/og/some-post.png
    cache-control: public, max-age=31536000, immutable
    x-vercel-cache: HIT

immutable (RFC 8246) tells browsers not to revalidate during the freshness lifetime. A year. Force-reload still triggers revalidation (user override is preserved by spec), but normal navigation skips the conditional GET entirely. Edge request bill drops to near-zero for these paths.
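If the list of slug-stable directories grows, the three rules can be generated instead of copied. This is one possible sketch, not part of the deployed config; `immutableRules` and the `HeaderRule` type are hypothetical names, and the rule shape mirrors the entries returned by `headers()` above.

```typescript
// Shape of one entry returned from headers() in next.config.ts.
type HeaderRule = {
  source: string;
  headers: { key: string; value: string }[];
};

const IMMUTABLE_CACHE = "public, max-age=31536000, immutable";

// Build one immutable Cache-Control rule per top-level directory.
function immutableRules(dirs: string[]): HeaderRule[] {
  return dirs.map((dir) => ({
    source: `/${dir}/:path*`,
    headers: [{ key: "Cache-Control", value: IMMUTABLE_CACHE }],
  }));
}

const rules = immutableRules(["videos", "og", "images"]);
console.log(rules.map((r) => r.source).join(" ")); // /videos/:path* /og/:path* /images/:path*
```

Inside the config, `headers()` would then return `immutableRules([...])`. Whether the indirection is worth it for three paths is a matter of taste.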

Part 5: Footgun, immutable is genuinely immutable

If you ever upload a new file under the same path, users will see the cached old version until the year-long TTL expires or they hard-refresh. To bust:

- Use slug-tied filenames you'll never reuse (my OG: `<slug>.png`)
- Or append `?v=N` to the asset URL when content changes
- In a pinch, remove the source rule, deploy, wait for cache eviction, re-add

For a blog where every asset is uniquely named per post, this trade is fine. For a marketing site that swaps hero.jpg weekly, don't go immutable. Use max-age=86400 (one day) instead.
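Both escape hatches are small enough to sketch. The helper names below are hypothetical, and prefixing the one-day policy with `public` is my assumption to match the other headers in this post.

```typescript
// Append a version parameter so a changed asset gets a new cache key.
function withVersion(assetPath: string, version: number): string {
  const separator = assetPath.indexOf("?") === -1 ? "?" : "&";
  return `${assetPath}${separator}v=${version}`;
}

// Pick a policy based on whether filenames are ever reused.
function cachePolicy(filenamesAreReused: boolean): string {
  return filenamesAreReused
    ? "public, max-age=86400" // one day: swapped files show up within 24h
    : "public, max-age=31536000, immutable"; // one year, no revalidation
}

console.log(withVersion("/og/some-post.png", 2)); // /og/some-post.png?v=2
console.log(cachePolicy(true)); // public, max-age=86400
```

The version parameter only helps if every reference to the asset (page markup, OG tags) is updated to the new URL.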

Part 6: Rolling 30-day, and an inbox I never read

Fix deployed. Quota didn't recover.

"But tomorrow is May 1, won't the quota reset?"

No. I assumed the same. Vercel community confirmed it's a rolling 30-day window, not calendar month.

Today (Apr 30) I'm at 1M. The Apr 9 spike (~110K) doesn't roll out of the window until May 9. The Apr 22 spike rolls out around May 22. Until then, ai-muninn runs throttled. Some requests blocked, some features limited.
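The roll-out dates follow from simple date arithmetic. In this sketch the year 2026 and the day-level granularity of the window are assumptions; Vercel's actual accounting may be finer-grained.

```typescript
const DAY_MS = 24 * 60 * 60 * 1000;

// Date (UTC, YYYY-MM-DD) on which traffic from `day` leaves a rolling window.
function rollsOutOn(day: string, windowDays: number = 30): string {
  const start = new Date(`${day}T00:00:00Z`);
  return new Date(start.getTime() + windowDays * DAY_MS).toISOString().slice(0, 10);
}

// Usage at any moment = sum of the most recent `windowDays` daily counts.
function rollingTotal(dailyCounts: number[], windowDays: number = 30): number {
  return dailyCounts.slice(-windowDays).reduce((sum, n) => sum + n, 0);
}

console.log(rollsOutOn("2026-04-09")); // 2026-05-09
console.log(rollsOutOn("2026-04-22")); // 2026-05-22
console.log(rollingTotal([110000, 5000, 5000], 2)); // 10000
```

The last line shows the mechanism: once the spike day falls outside the window, the total drops by the full size of the spike in one step.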

The embarrassing finding

After upgrading to Pro and finally opening Vercel's notification inbox, I saw:

- Apr 21: 75% usage email. Sent on time. 9 days before the wall hit.

- Apr 30 (today): 100% usage email.

- Inbox total: 60 unread, including multiple deploy failures from mid-April that I'd also missed.

The 75% notification arrived with 9 days of buffer. If I'd read it that day, I'd have caught the Apr 21-22 spike before it pushed me over. The alert system worked. I treated the inbox as /dev/null.

The real fix isn't building a webhook for alerts (Vercel already sends the alerts by email). It's routing alerts to where I actually look: Telegram, Slack, anywhere that breaks through the wall of unread.
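As a rough sketch of the routing idea, the snippet below formats a usage alert and builds a request for the Telegram Bot API's `sendMessage` method. How the usage numbers reach this code (a mail forwarding rule, a manual check) is outside the sketch, and `botToken` and `chatId` are placeholders you would supply.

```typescript
interface TelegramRequest {
  url: string;
  method: "POST";
  headers: Record<string, string>;
  body: string;
}

// Return an alert message once usage crosses a threshold, otherwise null.
function usageAlert(used: number, limit: number, thresholds: number[] = [75, 100]): string | null {
  const percent = (used * 100) / limit;
  const crossed = thresholds.filter((t) => percent >= t);
  if (crossed.length === 0) return null;
  const highest = Math.max(...crossed);
  return `Edge Requests at ${percent.toFixed(1)}% (${used}/${limit}), crossed ${highest}% threshold`;
}

// Build (but do not send) a Telegram Bot API sendMessage request.
function telegramRequest(botToken: string, chatId: string, text: string): TelegramRequest {
  return {
    url: `https://api.telegram.org/bot${botToken}/sendMessage`,
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify({ chat_id: chatId, text }),
  };
}

const message = usageAlert(1002906, 1000000);
if (message !== null) {
  const request = telegramRequest("<bot-token>", "<chat-id>", message);
  console.log(request.url, request.body);
}
```

The returned object can be passed to any HTTP client, for example `fetch(request.url, request)`.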

Part 7: What this article itself might break

A few honest risks before publication:

Streisand effect. Publishing an article about Edge Request quotas could attract enough attention to blow the quota again. If you're reading this, your page view contributed 1 OG + 1 video + ~5-8 inline images to my next 30 days.

The "300K monthly" projection might be wrong. I'm extrapolating from 3 days of post-fix data (Apr 28-30, ~10K/day). May could spike again from this very article.

immutable will burn someone. Readers copy-pasting these 3 lines will eventually re-upload an asset under the same name and wonder why Twitter shows the old version. Read Part 5 carefully.

Part 8: I changed my mind mid-draft, upgraded

Original plan was: "Daily 10K post-fix, monthly ~300K, well within Hobby. $20/mo is overkill. Just add a webhook for alerts." Clean, frugal, matched the "3 lines saved you" hook.

Then Codex + Gemini fact-check plus actually clicking Upgrade rewrote the conclusion three times:

| Step | What I thought | Reality |
|---|---|---|
| Draft 1 | "Vercel has no alerts" | Hobby has 75/100% email, I just didn't read inbox |
| After fact-check | "Pro doesn't include 30-day logs" | Pro includes 1M Observability Plus events/mo, no extra cost (Apr 2 2026 changelog removed the OP base fee) |
| After upgrading | "Vercel's alerts came too late" | Alerts came 9 days early, I never opened inbox |

What Pro actually delivered:

| Item | Hobby (free) | Pro ($20/mo) |
|---|---|---|
| Edge Requests | 1M / 30d rolling | 10M |
| Runtime log retention | 1h / 4000 rows | 30 days (via OP, 1M events/mo included) |
| Spend cap / custom alerts | ❌ | ✅ |
| Unblock current throttle | wait until May 9–22 | immediate |
| Buffer if article goes viral | risk | 10x |

For someone writing through a quota wall, $20/mo to stop worrying + immediate unblock + post-mortem log access is reasonable. For a smaller hobby blog, Hobby + forwarding Vercel inbox to Telegram is probably better. There's no universal answer.

What's next: May 30 follow-up

Three things to settle by end of May:

- Did the fix work? Projected daily 5–15K, monthly 200–400K

- Was Pro worth it? $20/mo × 1 month, then decide whether to keep

- Did this article re-trigger the spike? Streisand check (Part 7)

If May 30 data looks closer to 1M than 300K, I had the wrong root cause and will publish a follow-up admitting it.

If the projection holds, those 3 lines + $20 might be the highest-ROI commit pair in ai-muninn history.
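The projection and the May 30 check reduce to a few lines of arithmetic. In this sketch, "closer to 1M than 300K" is read as "above the midpoint of the two", which is my interpretation; the function names are hypothetical.

```typescript
const QUOTA = 1000000;
const WINDOW_DAYS = 30;

// Extrapolate a steady daily rate over the 30-day window.
function projectWindow(dailyRequests: number): number {
  return dailyRequests * WINDOW_DAYS;
}

// The root-cause claim holds if the observed total is nearer the
// projection than the quota, i.e. below the midpoint (650K by default).
function rootCauseHolds(observedTotal: number, projected: number = 300000): boolean {
  const midpoint = (projected + QUOTA) / 2;
  return observedTotal < midpoint;
}

console.log(projectWindow(10000)); // 300000
console.log(projectWindow(5000), projectWindow(15000)); // 150000 450000
console.log(rootCauseHolds(320000)); // true
console.log(rootCauseHolds(900000)); // false
```

Note that a flat 5–15K/day extrapolates to 150–450K, slightly wider than the 200–400K range quoted above.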

References

FAQ

- How does Vercel Hobby's 1M Edge Requests limit work?

- Rolling 30-day window. Every request hitting Vercel's edge counts, including HTTP 304 Not Modified responses to conditional GETs. Cache HITs at the CDN don't help if the browser is forced to revalidate. Once you exceed 1M, free resources get throttled until the spike days roll out of the 30-day window. Not a calendar month reset.

- Why does cache HIT still cost an Edge Request?

- If the response carries `cache-control: public, max-age=0, must-revalidate`, browsers send a conditional GET on every page load to verify freshness. Even when the server returns 304 Not Modified without re-sending the body, that round trip is one edge request. Vercel meters request count, not bandwidth.

- What's the default Cache-Control for Next.js public/?

- Next.js itself sets `Cache-Control: public, max-age=0` on `/public/*` files. Vercel's edge layer adds `must-revalidate` so new deploys reach users immediately. The combination forces browsers to revalidate on every load. Fine for build artifacts that get overwritten, costly for slug-stable static media (OG images, blog covers, embedded videos).

- How do you fix it?

- Add explicit Cache-Control rules in `next.config.ts` `headers()` for paths where filenames are slug-stable. Use `public, max-age=31536000, immutable`. Browsers skip revalidation for a year. Footgun: if you ever re-upload the same filename, users get the old cached version until the cache expires or they hard-refresh. Use slug-tied filenames or `?v=N` to bust cache.

- Should you upgrade to Pro to fix this?

- Pro ($20/mo) gives you immediate unblock from the rolling-window throttle, 10x edge request buffer (10M instead of 1M), Pro spend management (custom alert thresholds + spend caps), and the ability to enable Observability Plus, which includes 30-day log retention with 1M events/month included before overage pricing kicks in (per [Vercel's April 2026 changelog](https://vercel.com/changelog/no-base-fee-for-observability-plus) removing the OP base fee). For most blog-scale projects, that 1M events headroom is unreachable. Hobby's built-in 75%/100% email alerts are real, but if your inbox is /dev/null they don't help.